Guided-by-Gut Accepted to ACL’26

Published:

After a long time in the making, I’m happy to say that “Guided by Gut: Efficient Test-Time Scaling with Reinforced Intrinsic Confidence” has been accepted as a main conference paper at the 64th Annual Meeting of the Association for Computational Linguistics (ACL 2026) in San Diego! The camera-ready version of the paper has been finalized and can be found here.

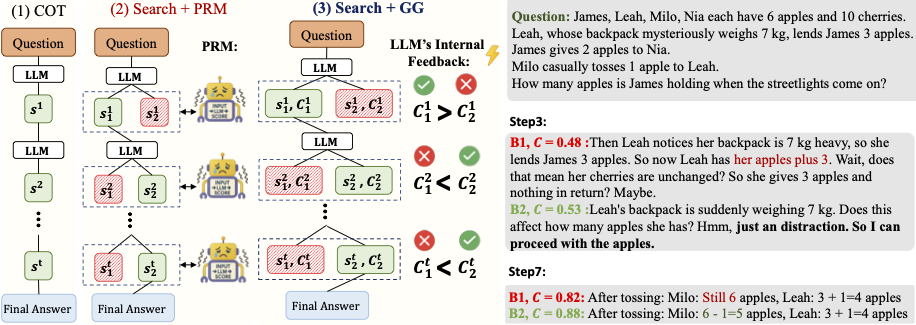

Guided by Gut, or ‘GG’ for short, is a Test-Time Scaling (TTS) method for reasoning models. GG performs a lightweight tree search guided solely by the intrinsic confidence signals of a Large Language Model (LLM). This allows smaller LLMs with fewer than 10B parameters to compete with much larger 70B+ parameter LLMs that use Chain-of-Thought (CoT) on reasoning tasks such as mathematical and logical problem solving.

Rather than relying on an external verifier, such as a Process Reward Model (PRM), GG uses the LLM’s own intrinsic confidence measures to estimate how confident the autoregressive model is in its own answer. This signal is combined with a robust tree-based search and reinforcement learning fine-tuning methods such as GRPO, so that smaller LLMs, such as those with fewer than 8B parameters that can be deployed locally, can compete with larger LLMs that use CoT. It also yields hardware savings, such as a 50% reduction in KV cache memory during reasoning.
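To give a rough feel for the idea, here is a minimal sketch of a confidence-guided search. It is not the implementation from the paper: the confidence measure (geometric mean of token probabilities), the `sample_steps` interface, the beam-style pruning, and the toy sampler are all assumptions made purely for illustration.

```python
import math
from typing import Callable, List, Sequence, Tuple

# Hypothetical interface: given the text so far, return candidate next steps as
# (step_text, token_probs), where token_probs are the probabilities the model
# assigned to the tokens it generated for that step.
Candidate = Tuple[str, Sequence[float]]
StepSampler = Callable[[str], List[Candidate]]


def step_confidence(token_probs: Sequence[float]) -> float:
    """Geometric mean of token probabilities (an assumed confidence proxy)."""
    if not token_probs:
        return 0.0
    log_sum = sum(math.log(max(p, 1e-12)) for p in token_probs)
    return math.exp(log_sum / len(token_probs))


def confidence_guided_search(
    prompt: str,
    sample_steps: StepSampler,
    beam_width: int = 2,
    max_depth: int = 3,
) -> Tuple[str, float]:
    """Expand reasoning paths step by step, keeping the most confident ones."""
    beams: List[Tuple[str, float]] = [(prompt, 1.0)]
    for _ in range(max_depth):
        expanded: List[Tuple[str, float]] = []
        grew = False
        for text, score in beams:
            candidates = sample_steps(text)
            if not candidates:
                # Finished path: carry it forward unchanged.
                expanded.append((text, score))
                continue
            grew = True
            for step_text, probs in candidates:
                expanded.append((text + step_text, score * step_confidence(probs)))
        if not grew:
            break
        # No external reward model: ranking uses only the model's own confidence.
        expanded.sort(key=lambda beam: beam[1], reverse=True)
        beams = expanded[:beam_width]
    return beams[0]


def toy_sampler(text: str) -> List[Candidate]:
    """Stand-in for an LLM: one confident step and one hesitant step."""
    if text.endswith("."):
        return []  # treat a trailing period as "answer finished"
    return [
        (" 2 + 2 = 4.", [0.9, 0.8, 0.95]),
        (" 2 + 2 = 5.", [0.9, 0.3, 0.4]),
    ]


best_text, best_score = confidence_guided_search("Q: What is 2 + 2?", toy_sampler)
print(best_text, round(best_score, 3))
```

In this toy setup the search keeps the path whose tokens were generated with higher probability, without ever calling a separate verifier. In a real setting `sample_steps` would wrap an actual LLM that exposes per-token probabilities.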

See you in San Diego in July!